Posted On May 12, 2026

How to Build an AI MVP Without Wasting Time and Budget

Everyone gets excited when they have an idea for an AI-powered product. The possibilities feel endless, the technology feels powerful, and it is tempting to just dive in and start building. But that excitement is also where a lot of businesses go wrong — they spend months and significant money developing something before ever checking whether real users actually want it.

That is exactly the problem an AI Minimum Viable Product, or MVP, is designed to solve. Build the essential thing first. Test it with real people. Learn what works and what does not. Then decide how far to take it.

Start With a Problem, Not a Technology

The most common mistake teams make when building AI products is falling in love with the technology before they have clearly defined the problem. They think about what AI can do and then try to find a use for it, rather than starting with a genuine user need and working backwards.

A strong AI MVP always begins with a specific, real problem. Not a vague ambition to “use AI to improve our business” — but a concrete challenge. Something like: customers are waiting too long for support responses, or users are dropping off because they cannot find relevant products quickly enough. When the problem is clear, the AI feature that addresses it becomes much easier to define.

Trying to build a sophisticated platform before you know exactly what problem you are solving is one of the fastest ways to burn through time and budget with nothing useful to show for it.

Define What Success Looks Like Before You Write a Single Line of Code

A well-defined goal does more than give developers direction — it acts as a filter. Every feature idea, every technical decision, every design choice can be measured against one simple question: does this help us validate our core idea?

If the answer is no, it does not belong in the MVP.

The purpose of a first version is not to be perfect. It is to find out whether the core concept works. That might mean building just one AI feature — an automated recommendation, a document summary tool, a basic chatbot — and nothing else. Keeping the goal focused keeps the budget in check and gets you to real feedback much faster.

Pick One High-Impact Feature and Build That Well

There is a natural temptation to pack an MVP with features. It feels as though more features mean more value, and more value means a better product. In reality, the opposite is usually true at this stage.

Every additional feature adds development time, introduces new ways things can go wrong, and delays the moment when real users can actually try what you have built. The smartest approach is to pick the one AI capability that delivers the most obvious and immediate value — and build that really well.

There is also no need to build everything from scratch. Pre-trained AI models and existing APIs for things like natural language processing, image recognition, or recommendation engines can handle the core functionality in a fraction of the time it would take to develop custom solutions. Use what already exists, test your idea, and build custom infrastructure only when you have proven there is genuine demand for it.

Validate Before You Go All In

Before committing serious resources to full development, test whether people actually want what you are building. This sounds obvious, but it is a step many teams skip because they are too eager to get started.

Validation does not require a finished product. A clickable prototype, a small beta launch with a limited group of users, or even a simple working demo can reveal a huge amount about whether the idea has legs. The feedback you get at this stage is far more valuable than anything you could work out in a planning meeting.

Many products fail not because the technology was bad, but because nobody checked whether the product solved a problem users actually cared about. Catching this early — before the big investment — is the whole point.

Build Lean, Iterate Fast

Lean development is not about cutting corners. It is about being disciplined with what you build and when. Instead of spending six months developing a full-featured platform, you build the smallest useful version, release it, watch how people use it, and improve it in short, focused cycles.

This approach keeps costs manageable, keeps the team focused, and — most importantly — keeps real user behaviour at the centre of every decision. You are not guessing what users need. You are watching what they actually do and adjusting accordingly.

One thing worth getting right from the start, even in a lean MVP, is the underlying architecture. It does not need to be complex, but it does need to be clean and modular — built in a way that can grow without needing to be torn apart and rebuilt later. Cutting corners on structure early almost always leads to expensive problems down the road.
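As a rough illustration of what "clean and modular" can mean in practice, the sketch below hides the AI feature behind a small interface so the backend can be swapped later without touching the rest of the application. All names here (Summarizer, FirstSentenceSummarizer, handle_request) are hypothetical and chosen only for this example; the placeholder backend stands in for whatever pre-trained model or external API a team actually chooses.

```python
from typing import Protocol


class Summarizer(Protocol):
    """Anything that can turn a document into a short summary."""

    def summarize(self, text: str) -> str: ...


class FirstSentenceSummarizer:
    """Placeholder backend (an assumption for this sketch):
    returns the first sentence instead of calling a real model."""

    def summarize(self, text: str) -> str:
        first, _, _ = text.strip().partition(". ")
        return first.rstrip(".") + "."


def handle_request(document: str, backend: Summarizer) -> dict:
    """Application logic depends only on the Summarizer interface,
    so a hosted API or custom model can replace the placeholder later."""
    return {"summary": backend.summarize(document), "chars_in": len(document)}


if __name__ == "__main__":
    doc = "Users wait too long. Support is slow."
    print(handle_request(doc, FirstSentenceSummarizer()))
```

The design choice is simply that the rest of the product never imports a specific model or vendor. If the MVP proves demand, a new class with the same summarize method can be dropped in without a rebuild.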

Data Quality Matters More Than Model Complexity

A lot of teams spend enormous energy debating which AI model to use, when the real issue is the quality of the data feeding into it. Even the most advanced model will produce poor results if the underlying data is messy, incomplete, or irrelevant.

From the very beginning, focus on collecting data that is clean, structured, and directly connected to what the product is trying to do. You do not need vast quantities of data at the MVP stage; you need the right data. In practice, cleaning up and better targeting the data often improves results more than swapping one model for another.
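One lightweight way to keep an eye on data quality at the MVP stage is a simple report that counts incomplete and duplicate records before anything reaches a model. The sketch below is a minimal example under stated assumptions: records are plain dictionaries with simple values such as strings or numbers, and the function name data_quality_report and the field names in the usage example are hypothetical.

```python
def data_quality_report(records: list[dict], required: list[str]) -> dict:
    """Count complete, incomplete, and duplicate records.

    A record is incomplete if any required field is missing or blank,
    and a duplicate if its required fields match an earlier complete record.
    Assumes field values are hashable (strings, numbers, and so on).
    """
    seen = set()
    complete = incomplete = duplicates = 0
    for record in records:
        values = tuple(record.get(field) for field in required)
        if any(v is None or str(v).strip() == "" for v in values):
            incomplete += 1
        elif values in seen:
            duplicates += 1
        else:
            seen.add(values)
            complete += 1
    total = len(records)
    return {
        "total": total,
        "complete": complete,
        "incomplete": incomplete,
        "duplicates": duplicates,
        "usable_ratio": complete / total if total else 0.0,
    }


if __name__ == "__main__":
    sample = [
        {"question": "Where is my order?", "label": "shipping"},
        {"question": "Where is my order?", "label": "shipping"},
        {"question": "Can I get a refund?", "label": ""},
        {"question": "How do I log in?", "label": "account"},
    ]
    print(data_quality_report(sample, required=["question", "label"]))
```

A report like this will not fix the data, but it makes the share of usable records visible before any time is spent comparing models.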

Avoid the Traps That Blow Up Budgets

Most AI development projects that go over budget do so for the same reasons. The scope keeps expanding. Features get added without clear justification. Weeks get spent building things users were never even asked about. And validation gets pushed to the end, by which point it is far too late and far too expensive to change direction.

The teams that stay on budget are the ones who resist the urge to solve every problem at once, who say no to features that do not serve the core goal, and who get user feedback early and often. One specific problem, solved well, tested quickly — that is the formula.

Keep Listening, Even After You Launch

Launching an MVP is not the finish line. It is the starting point for learning. Real users will use your product in ways you did not expect, hit problems you did not anticipate, and tell you — directly or through their behaviour — what actually matters to them.

The teams that succeed with AI products are the ones who treat that feedback as their most valuable resource. They update quickly, drop what is not working, double down on what is, and stay in close contact with the people they are building for. Waiting for a big, polished release before collecting feedback is a strategy that consistently leads to products that miss the mark.

Conclusion

Building an AI MVP successfully requires a balanced approach that focuses on solving real problems without unnecessary complexity. Businesses that use existing AI tools, prioritise quality data, maintain lean development processes, and collect early user feedback can reduce both development time and overall costs. Instead of building a fully advanced product immediately, companies should focus on validating the idea quickly and improving the product step by step.

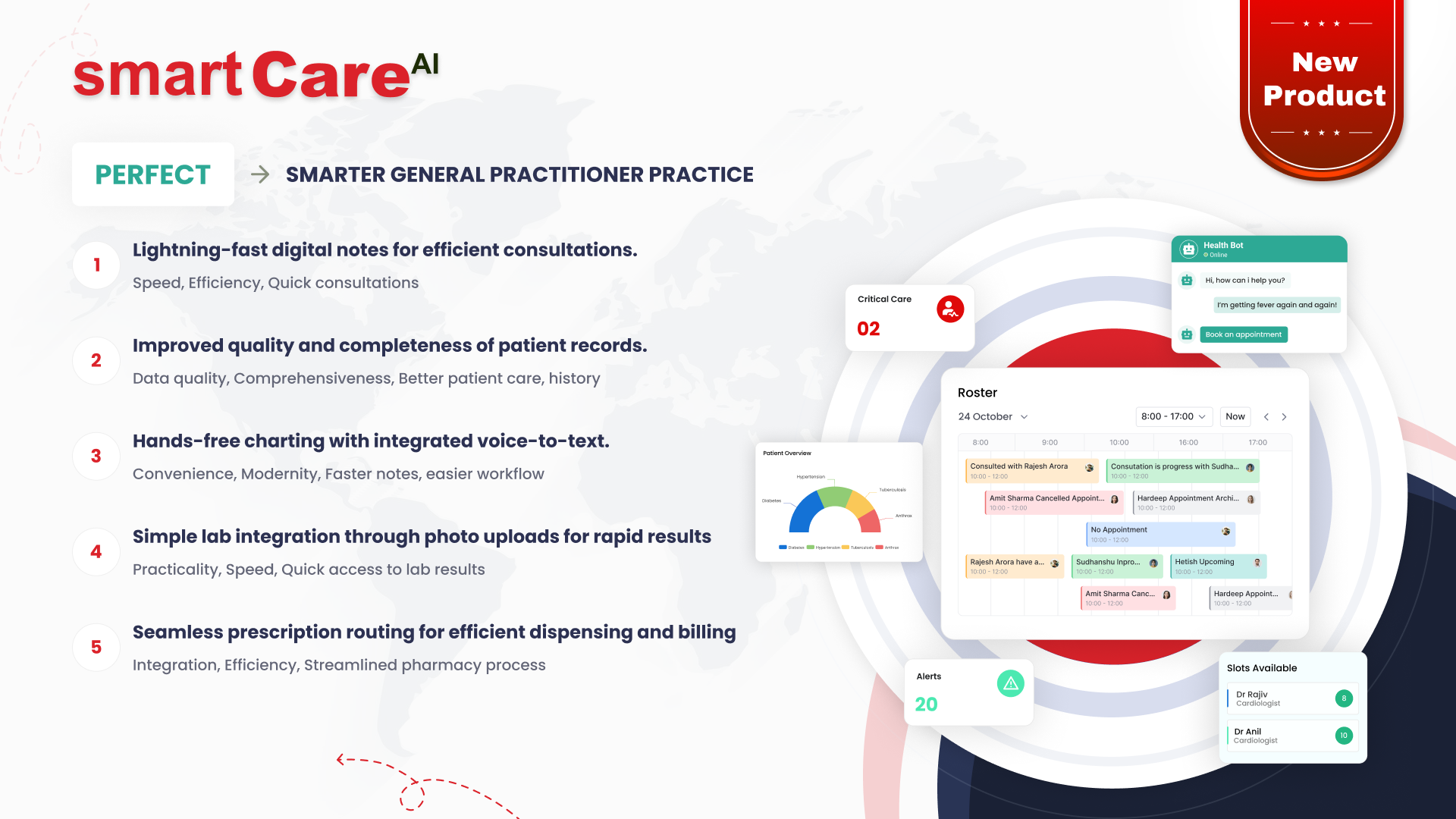

Organisations such as smartData support businesses with scalable AI MVP development strategies that help transform ideas into functional products while maintaining flexibility, speed, and long-term growth potential.